We say we don’t trust AI, but our behavior tells a different story.

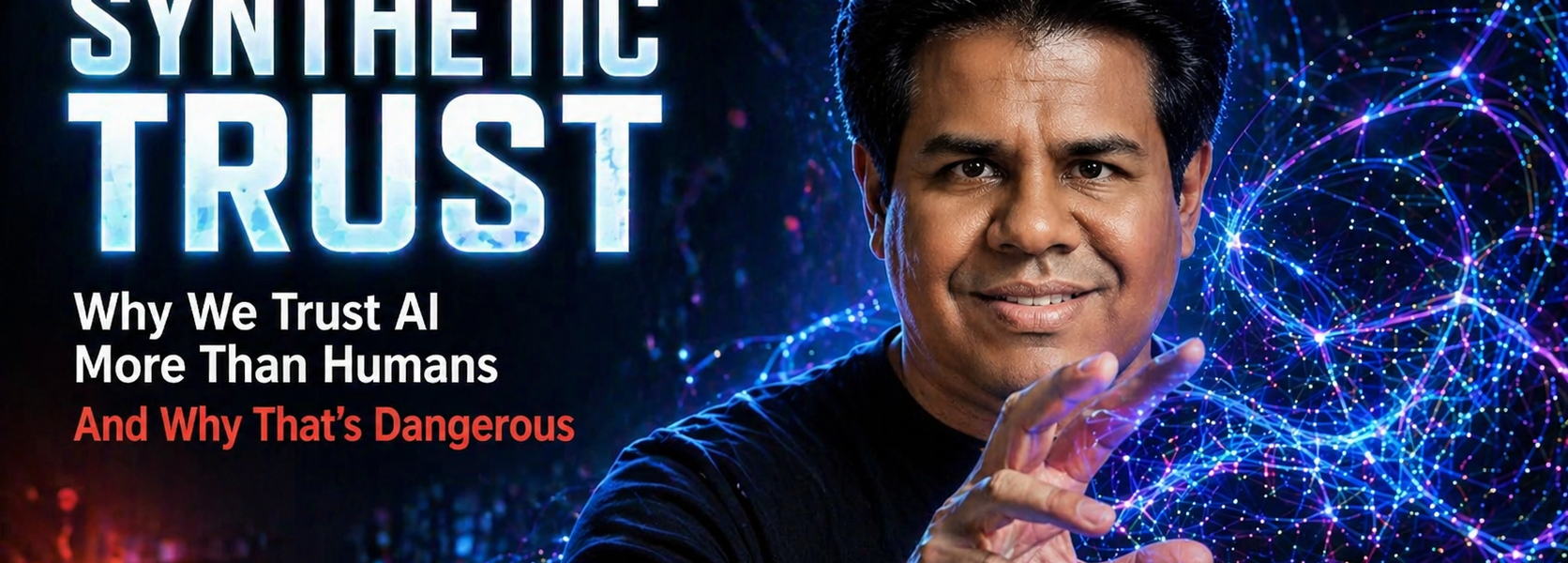

In this episode, Rohit Shetty explores synthetic trust: the misplaced confidence people put in AI-generated content simply because it sounds authoritative, fluent, and structured.

Blending cognitive psychology, behavioral science, and real-world case studies, this episode uncovers how AI exploits deeply rooted mental shortcuts to earn our trust.

What You’ll Learn

- The “trust paradox” in AI usage

- 6 hidden trust triggers in AI-generated content

- 5 cognitive biases influencing decision-making

- Case studies across healthcare, law, journalism, and cybersecurity

- How to build your personal AI trust calibration framework

Why It Matters

As AI becomes embedded in everyday decisions, from medical advice to financial choices, the gap between how much we trust it and how reliable it actually is keeps growing.

Understanding this gap isn’t optional anymore. It’s essential.